
On-Demand Webinar

Discover Intenseye’s new Sentinel device lineup and the future of Physical AI.

Watch Webinar

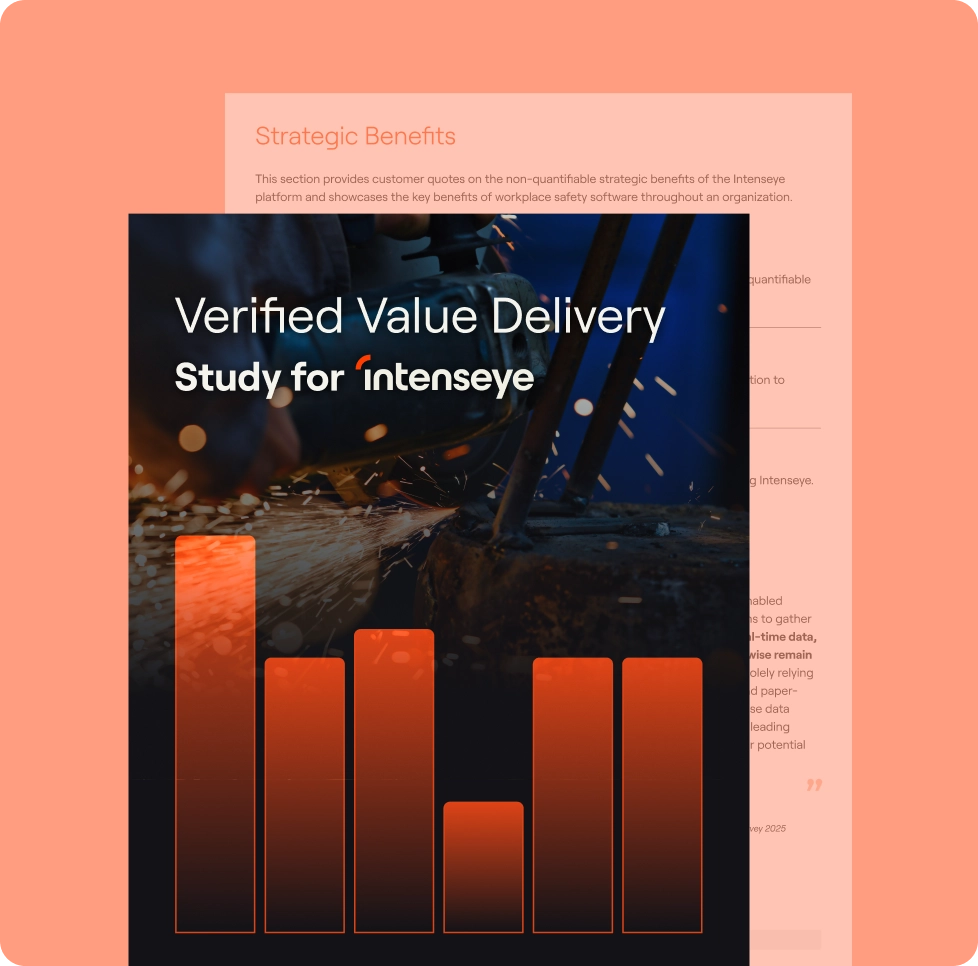

ROI Playbook

Value Delivery Study

Read more

Podcast

Stay Safe with AI: Michael Toffel

Listen

Press

Why manufacturing companies now see safe workplaces as a competitive advantage

Read more
