Ethical and unrivalled safety AI meets enterprise-ready software

True AI, enterprise-ready

Intenseye's EHS management software epitomizes 'true AI' – delivering an out-of-the-box, comprehensive, and enterprise-ready solution. Our technology is built to integrate seamlessly into your existing systems, offering the most extensive suite of AI models in the industry.

End-to-end, in-house expertise

Our commitment to an end-to-end solution means we handle everything internally – from initial development to ongoing improvements. By not relying on third-party labelling solutions, Intenseye ensures a streamlined, efficient, and self-sufficient AI operation.

Unmatched innovation and accuracy

We set the standard for AI-powered safety solutions. Our AI models process 22 billion frames from around the globe every single day, and that data diversity drives an unmatched pace of innovation.

DATA PRIVACY

Commitment to ethical AI

Powered by an ever-growing diverse global dataset

Every day, our AI systems process an extraordinary 22 billion frames, drawing from a vast, ever-growing global dataset. This unparalleled data volume fuels our continuous advancements, ensuring our AI solutions are not only unmatched but also adhere to the highest ethical standards. Our ethical AI development is rigorously overseen by Fortune 500 customers and third-party validators, guaranteeing responsible and fair AI practices in every aspect of our technology.

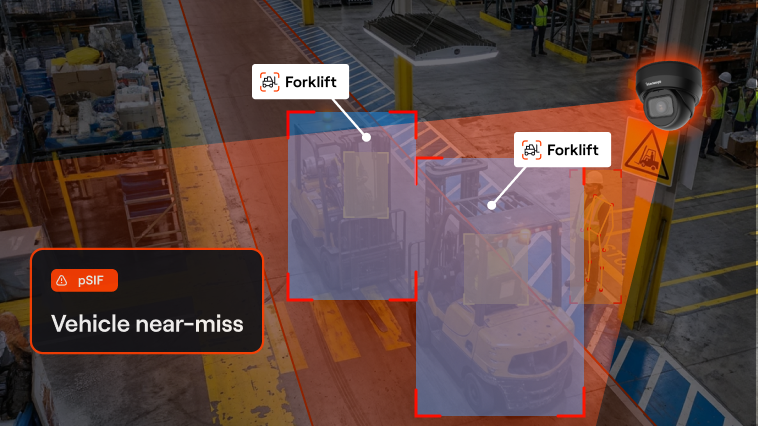

AI DEMO

Ethical AI, privacy by default and by design

Deployment Types

Flexible deployment to fit your enterprise needs

Choose from our versatile deployment options, designed for scalability and security to align with your business infrastructure.

01. Seamless cloud integration: Agility and efficiency

Opt for our cloud-based solution to leverage the speed and flexibility of the cloud. Enjoy automatic updates, scalable resources, and a hassle-free maintenance experience, all while ensuring top-notch security and data protection.

02. Hybrid on-premise solution: Tailored and secure

Combine the best of both worlds with our hybrid on-premise deployment. This option allows you to maintain critical data on-site while utilizing the cloud for scalability and accessibility, ensuring a customized fit for your specific enterprise requirements.

CAMERA INTEGRATIONS

Universal camera compatibility: Seamless integration with your existing infrastructure

Intenseye's platform is designed to work harmoniously with 90% of IP cameras globally.

Our software effortlessly connects to your existing camera infrastructure, supporting a broad range of models and brands. Thanks to our compatibility with H.264 video encoding and the RTSP streaming protocol – standards adopted by all major camera manufacturers – integration is straightforward. If your CCTV system uses IP cameras connected to a network, chances are high that Intenseye will integrate seamlessly with your setup, ensuring minimal disruption and maximum efficiency in deploying our AI solutions.
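For teams taking stock of their cameras before a rollout, the two requirements named above (an RTSP stream and H.264 encoding) can be pre-checked with a few lines of Python. This is a minimal sketch, not Intenseye tooling: the function names, the example address, and the idea of recording each camera's codec as a text label are all assumptions made for illustration, and the check does not connect to any camera.

```python
from urllib.parse import urlsplit

# Assumption: the requirements are taken from the text above (H.264 over RTSP).
SUPPORTED_SCHEMES = {"rtsp"}
SUPPORTED_CODECS = {"h264"}


def normalize_codec(name: str) -> str:
    """Map spellings such as 'H.264', 'h264', or 'H-264' to 'h264'."""
    return "".join(ch for ch in name.lower() if ch.isalnum())


def is_candidate_stream(url: str, codec: str) -> bool:
    """Return True if the URL is an RTSP URL with a host and the codec is H.264.

    Hypothetical helper: it only inspects the strings it is given.
    """
    parts = urlsplit(url)
    return (
        parts.scheme in SUPPORTED_SCHEMES
        and bool(parts.hostname)
        and normalize_codec(codec) in SUPPORTED_CODECS
    )


if __name__ == "__main__":
    # 192.0.2.10 is a documentation-only address used as a placeholder.
    print(is_candidate_stream("rtsp://192.0.2.10:554/stream1", "H.264"))
```

A check like this only screens an inventory list; whether a given camera actually integrates still depends on the network setup and on the stream itself.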

Robust security in our software architecture: Encryption at rest and in transit

APIS

Streamlined enterprise integrations with Intenseye APIs

Our APIs are designed for seamless integration with your existing enterprise systems, facilitating efficient data exchange and enhanced functionality. Whether it's integrating with your existing EHS management systems, HR platforms, or any other enterprise software, our flexible API architecture ensures a smooth, secure, and scalable integration process. Empower your organization with Intenseye's advanced AI capabilities, optimized for the enterprise environment.
